Review

This is the next post in the series. The earlier posts first looked at using Reactive Extensions (Rx) to simplify Windows Forms UI logic, then at sharing a viewmodel between a WPF GUI and a WinForms version rewritten with ReactiveUI, and ended with a short article on testing the viewmodels.

ReactiveUI News

The ReactiveUI API has been quite volatile over the last year, and this series is in need of an update[0. See ReactiveUI Design Guidelines]. CodeProject author gardner9032 published a nice teaser article showing the ReactiveUI mechanism for writing simplified viewmodel-view bindings [1. see article @CodeProject], which is the primary trigger for this post.

There are plenty of news items and updated articles on Paul Betts’ blog, which is a good resource for API updates. Phil Haack’s blog is also a superb resource for explanations of and commentary on the use of ReactiveUI in real-world applications.

The ReactiveUI project is quite active: the community seems to have grokked the gist of it, and the list of supported platforms has become more than convincing.

Getting rid of events

ReactiveUI's key feature is that it lets you write declarative UI glue code, and if the viewmodels are also based on Reactive Extensions, a declarative C# style can be used throughout the project. The previous ReactiveUI Windows Forms examples converted event sequences into observable sequences of values themselves; in this example, the ReactiveUI WinForms library takes care of that conveniently. The earlier viewmodels also contained some imperative code, so for this article they are not reused but written from scratch.
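The manual event-to-observable step from the earlier examples roughly amounts to the following. Rx offers Observable.FromEventPattern for this; the BCL-only sketch below spells out the mechanism instead, with a hypothetical FakeTextBox standing in for a WinForms TextBox. Each subscription attaches an event handler, and disposing the subscription detaches it. Unlike ReactiveUI's WhenAnyValue, which also delivers the current value on subscription, this sketch reports changes only.

```csharp
using System;
using System.Collections.Generic;

// Hypothetical event source standing in for a WinForms TextBox.
public sealed class FakeTextBox
{
    private string text = "";
    public event EventHandler TextChanged;

    public string Text
    {
        get => text;
        set { text = value; TextChanged?.Invoke(this, EventArgs.Empty); }
    }
}

public static class EventToObservable
{
    // Wraps TextChanged as IObservable<string>: each subscription attaches
    // a handler that pushes the current text; disposing detaches it.
    public static IObservable<string> TextChanges(FakeTextBox box) => new TextObservable(box);

    private sealed class TextObservable : IObservable<string>
    {
        private readonly FakeTextBox box;
        public TextObservable(FakeTextBox box) { this.box = box; }

        public IDisposable Subscribe(IObserver<string> observer)
        {
            EventHandler handler = (s, e) => observer.OnNext(box.Text);
            box.TextChanged += handler;
            return new Detach(() => box.TextChanged -= handler);
        }
    }

    private sealed class Detach : IDisposable
    {
        private Action undo;
        public Detach(Action undo) { this.undo = undo; }
        public void Dispose() { undo?.Invoke(); undo = null; }
    }
}

// Hypothetical observer that simply records the values it receives.
public sealed class RecordingObserver : IObserver<string>
{
    public List<string> Values { get; } = new List<string>();
    public void OnNext(string value) => Values.Add(value);
    public void OnError(Exception error) { }
    public void OnCompleted() { }
}
```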

ViewModel

See the code.

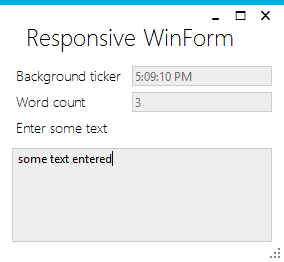

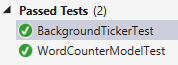

The viewmodel’s task is the same as before: a ticker runs in the background while the words in the input text are counted asynchronously, with the calculation throttled to 0.5 seconds. ReactiveUI.ReactiveObject takes care of most of the viewmodel boilerplate.
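To make the 0.5-second throttling concrete: Rx.NET's Throttle behaves like a debounce, dropping a value whenever a newer one arrives within the due time, so only a pause in typing lets a value through to the word count. The sketch below is a hypothetical, timer-free model of that rule over a recorded, time-ordered list of inputs; ThrottleModel and Survivors are illustrative names, not Rx APIs.

```csharp
using System;
using System.Collections.Generic;

// Hypothetical model (not part of Rx) of which inputs Throttle(dueTime)
// lets through for a finite, time-ordered sequence.
public static class ThrottleModel
{
    // An input survives when no newer input follows within dueTime.
    // The final input always survives, because nothing replaces it.
    // Exact ties depend on the scheduler in real Rx; here the earlier value wins.
    public static List<T> Survivors<T>(IReadOnlyList<(TimeSpan At, T Value)> inputs, TimeSpan dueTime)
    {
        var survivors = new List<T>();
        for (int i = 0; i < inputs.Count; i++)
        {
            bool isLast = i == inputs.Count - 1;
            if (isLast || inputs[i + 1].At - inputs[i].At >= dueTime)
            {
                survivors.Add(inputs[i].Value);
            }
        }
        return survivors;
    }
}
```

For keystrokes at 0, 100 and 200 ms, a pause, then two more at 1000 and 1100 ms, only the text at 200 ms and the final text would reach the word counter.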

Output (read-only) properties

The ReactiveUI way of creating output properties is through ObservableAsPropertyHelper<T>:

```csharp
private readonly ObservableAsPropertyHelper<string> backgroundTicker;

public string BackgroundTicker
{
    get { return backgroundTicker.Value; }
    //...
}
```
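Conceptually, ObservableAsPropertyHelper subscribes to an observable, keeps the latest value for the property getter, and notifies its owner so that PropertyChanged is raised for the property. The self-contained sketch below mimics that idea with BCL types only; SimpleSubject, LatestValueProperty and TickerViewModel are hypothetical names, and ReactiveUI's real implementation also deals with scheduling and errors, which this sketch ignores.

```csharp
using System;
using System.Collections.Generic;
using System.ComponentModel;

// Hypothetical minimal subject: pushes values to all current observers.
public sealed class SimpleSubject<T> : IObservable<T>
{
    private readonly List<IObserver<T>> observers = new List<IObserver<T>>();

    public IDisposable Subscribe(IObserver<T> observer)
    {
        observers.Add(observer);
        return new Unsubscriber(() => observers.Remove(observer));
    }

    public void OnNext(T value)
    {
        foreach (var o in observers.ToArray()) o.OnNext(value);
    }

    private sealed class Unsubscriber : IDisposable
    {
        private Action dispose;
        public Unsubscriber(Action dispose) { this.dispose = dispose; }
        public void Dispose() { dispose?.Invoke(); dispose = null; }
    }
}

// Hypothetical stand-in for ObservableAsPropertyHelper<T>: caches the latest
// value and calls onChanged so the owner can raise PropertyChanged.
public sealed class LatestValueProperty<T> : IObserver<T>, IDisposable
{
    private readonly Action onChanged;
    private readonly IDisposable subscription;

    public LatestValueProperty(IObservable<T> source, T initialValue, Action onChanged)
    {
        Value = initialValue;
        this.onChanged = onChanged;
        subscription = source.Subscribe(this);
    }

    public T Value { get; private set; }

    public void OnNext(T value) { Value = value; onChanged(); }
    public void OnError(Exception error) { }   // ignored in this sketch
    public void OnCompleted() { }
    public void Dispose() => subscription.Dispose();
}

// Viewmodel with a read-only output property backed by the helper.
public sealed class TickerViewModel : INotifyPropertyChanged
{
    private readonly LatestValueProperty<string> backgroundTicker;

    public event PropertyChangedEventHandler PropertyChanged;

    public TickerViewModel(IObservable<string> ticks)
    {
        backgroundTicker = new LatestValueProperty<string>(
            ticks, "",
            () => PropertyChanged?.Invoke(this, new PropertyChangedEventArgs(nameof(BackgroundTicker))));
    }

    public string BackgroundTicker => backgroundTicker.Value;
}
```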

The viewmodel's constructor receives an IScheduler for scheduling on the correct thread, and an IObservable<string>, which will be the stream of input from the view. Note the ToProperty helper:

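```csharp
public MyViewModel(IScheduler scheduler, IObservable<string> input)
{
    // Tick once per second, switch to the UI scheduler and format the time.
    // ToProperty stores each value in backgroundTicker and raises
    // PropertyChanged for BackgroundTicker.
    Observable.Interval(TimeSpan.FromSeconds(1))
        .ObserveOn(scheduler)
        .Select(_ => DateTime.Now.ToLongTimeString())
        .ToProperty(this, x => x.BackgroundTicker, out backgroundTicker);

    //...
}
```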

The word-counting logic is implemented in a similar fashion, by transforming the incoming stream of strings.
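The post does not spell out the counting rule. Assuming a word is any run of non-whitespace characters, the per-string step of that transformation could be a pure function like the hypothetical CountWords below; in the viewmodel it would presumably be applied with Select to the throttled input stream before ToProperty. Keeping it pure means it can be tested without any schedulers.

```csharp
using System;

public static class WordCounter
{
    // Assumption: a word is any maximal run of non-whitespace characters.
    public static int CountWords(string text)
    {
        if (string.IsNullOrWhiteSpace(text)) return 0;
        // A null separator array splits on any whitespace character;
        // RemoveEmptyEntries drops the gaps left by repeated whitespace.
        return text.Split((char[])null, StringSplitOptions.RemoveEmptyEntries).Length;
    }
}
```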

View

See the code.

To remove even more boilerplate, the WinForms Form subclass implements ReactiveUI's IViewFor<MyViewModel> interface. This allows much simpler bindings that are checked at compile time and at run time, without strings for property names. The implementation is straightforward and pays off once the views become more complex than this example:

IViewFor

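```csharp
// Inside the Form class, which implements IViewFor<MyViewModel>
public MyViewModel VM { get; private set; }

// Untyped interface member: casts to the concrete viewmodel type
object IViewFor.ViewModel
{
    get { return VM; }
    set { VM = (MyViewModel)value; }
}

// Typed interface member: shares the same VM property
MyViewModel IViewFor<MyViewModel>.ViewModel
{
    get { return VM; }
    set { VM = value; }
}
```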
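Two explicit implementations are needed because ReactiveUI's IViewFor<T> builds on the non-generic IViewFor, and each declares its own ViewModel property. The stand-alone sketch below reproduces that shape with hypothetical local interfaces (IViewForSketch, IViewForSketch<T>) instead of ReactiveUI's, and a plain class instead of a Form, to show that both members read and write the same VM property and that the untyped setter rejects a wrong type.

```csharp
using System;

// Hypothetical stand-ins mirroring the shape of IViewFor / IViewFor<T>.
public interface IViewForSketch
{
    object ViewModel { get; set; }
}

public interface IViewForSketch<T> : IViewForSketch where T : class
{
    new T ViewModel { get; set; }
}

// Hypothetical empty viewmodel for the sketch.
public class MyViewModel { }

// Plain class instead of a Form, so the sketch runs without WinForms.
public class MyView : IViewForSketch<MyViewModel>
{
    public MyViewModel VM { get; private set; }

    // Untyped access: the cast throws InvalidCastException for a wrong type.
    object IViewForSketch.ViewModel
    {
        get { return VM; }
        set { VM = (MyViewModel)value; }
    }

    // Typed access: no cast needed.
    MyViewModel IViewForSketch<MyViewModel>.ViewModel
    {
        get { return VM; }
        set { VM = value; }
    }
}
```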

Bindings

None of the controls on the form have events wired or bindings set in the designer. The glue code is reduced to instantiating the viewmodel …

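```csharp
VM = new MyViewModel(
    // Marshals viewmodel updates onto the form's UI thread
    new System.Reactive.Concurrency.ControlScheduler(this),
    // Stream of the input box's text, expressed without string property names
    this.WhenAnyValue(x => x.inputBox.Text)
);
```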

… and declaring the bindings[2. The ReactiveUI WinForms implementation does not yet seem to support fully read-only properties with the default bindings, hence the empty setter in the viewmodel.] [3. The scheduler (ControlScheduler) comes from the Rx Windows Forms helpers.].

```csharp
this.Bind(VM, x => x.BackgroundTicker, x => x.tickerBox.Text);
this.Bind(VM, x => x.WordCount, x => x.wordCountBox.Text);
```
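One way around the empty setter from footnote 2 is a one-way binding, which only reads the viewmodel property and pushes its value into the control, so a getter is enough. ReactiveUI provides OneWayBind for this; whether its WinForms support covered this case at the time is not verified here. The BCL-only sketch below shows the mechanism with a hypothetical BindOneWay helper and CountViewModel, using an expression selector rather than a string for the property name and a plain setter delegate standing in for wordCountBox.Text.

```csharp
using System;
using System.ComponentModel;
using System.Linq.Expressions;

// Hypothetical viewmodel whose WordCount has a getter only.
public sealed class CountViewModel : INotifyPropertyChanged
{
    private int wordCount;
    public event PropertyChangedEventHandler PropertyChanged;

    public int WordCount => wordCount;

    // Stand-in for the observable pipeline that would normally set the value.
    public void Publish(int count)
    {
        wordCount = count;
        PropertyChanged?.Invoke(this, new PropertyChangedEventArgs(nameof(WordCount)));
    }
}

public static class OneWayBinding
{
    // Hypothetical helper: copies the selected property into the target now
    // and on every change notification for it. Dispose the result to unbind.
    public static IDisposable BindOneWay<TSource, TValue>(
        TSource source, Expression<Func<TSource, TValue>> property, Action<TValue> setTarget)
        where TSource : INotifyPropertyChanged
    {
        // Compile-time checked selector instead of a string property name.
        string name = ((MemberExpression)property.Body).Member.Name;
        Func<TSource, TValue> getter = property.Compile();

        setTarget(getter(source));
        PropertyChangedEventHandler handler = (s, e) =>
        {
            if (e.PropertyName == name) setTarget(getter(source));
        };
        source.PropertyChanged += handler;
        return new Unbinder(() => source.PropertyChanged -= handler);
    }

    private sealed class Unbinder : IDisposable
    {
        private Action undo;
        public Unbinder(Action undo) { this.undo = undo; }
        public void Dispose() { undo?.Invoke(); undo = null; }
    }
}
```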